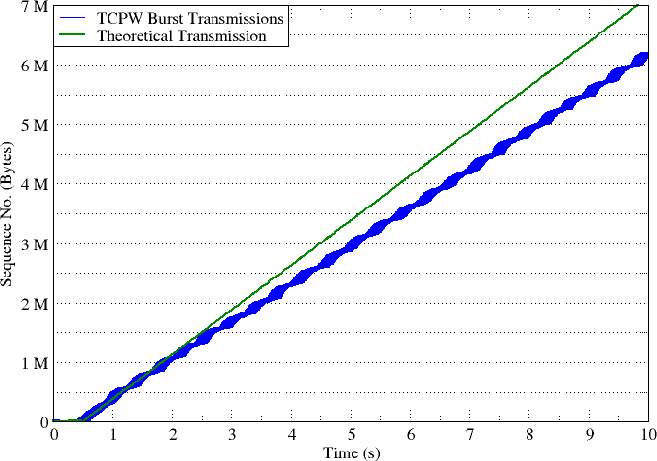

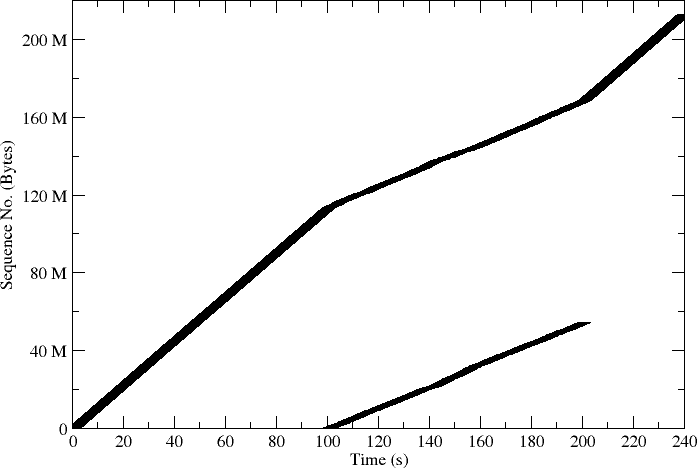

Fig1. - TCP Wave burst transmission.

TCP Wave is a new transport protocol designed as an alternative to the standard TCP versions for current broadband networks, where a set of challenging communication aspects can impair performance, such as large-latency links, handovers, and dynamic network switching. The full protocol algorithm and specification are presented in [1].

TCP Wave envisages sender-side-only modifications and guarantees fairness when sharing the bottleneck with standard TCP flows. Its original design is based on a burst transmission paradigm: the sender sends packets in bursts at time intervals scheduled by an internal timer (TxTime). This approach forces the unmodified TCP receiver to return acknowledgement (ACK) packets in trains, which carry information about network congestion (from RTT measurements) and link capacity (from the ACK train dispersion, i.e., the separation of the ACKs in time). The TCP Wave sender relies on these ACK-based measurements to update its internal TxTime and so achieve the optimal transmission rate.
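As an illustration of how an ACK train can carry capacity information, the following user-space sketch computes the dispersion of one train and derives a rough bottleneck estimate from it. The function names, the microsecond timestamps and the fixed segment size are assumptions made for this example; they are not taken from the TCP Wave sources, and the estimate follows the generic packet-train dispersion idea rather than the exact computation in [1].

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper (not from the TCP Wave sources): time between the
 * first and the last ACK of one train, in microseconds. */
uint64_t ack_train_dispersion_us(const uint64_t *ack_us, size_t n)
{
    assert(ack_us != NULL && n >= 2);
    assert(ack_us[n - 1] >= ack_us[0]);
    return ack_us[n - 1] - ack_us[0];
}

/* Rough bottleneck estimate in bit/s. The n ACKs of a train span n - 1
 * inter-arrival gaps; each gap is assumed to match the time one segment
 * of segment_bytes needs to cross the bottleneck. */
uint64_t capacity_estimate_bps(const uint64_t *ack_us, size_t n,
                               uint32_t segment_bytes)
{
    uint64_t disp_us = ack_train_dispersion_us(ack_us, n);

    assert(disp_us > 0);
    return (uint64_t)(n - 1) * segment_bytes * 8u * 1000000u / disp_us;
}
```

For example, five ACKs arriving 2 ms apart for 1500-byte segments give a dispersion of 8000 µs and an estimate of 6 Mbit/s.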

TCP Wave performance has already been assessed in simulated scenarios, under different network and application configurations, in [2] and [3].

With this approach, TCP Wave makes it possible to overcome the ACK-clocked, window-based transmission followed by other TCP variants. The burst transmission is regulated by the ACK feedback but not directly triggered by it. The TCP Wave sender implements a proactive rate control that quickly tracks the available end-to-end capacity, without being affected by RTT or other network limitations.

The results on this page were obtained with the first release of the TCP Wave code, implemented and validated on Linux kernel 4.12. Details on implementation, installation (including source code) and validation are provided below.

TCP Wave code is also available for the ns-3 discrete network simulator (ver. 3.23): detailed ns-3 implementation and source code

The development approach within the Linux kernel aims to add this new sender paradigm without deeply touching existing code. To this end, we added four (optional) new congestion control functions:

    /* get the expiration time for the send timer (optional) */
    unsigned long (*get_send_timer_exp_time)(struct sock *sk);

    /* no data to transmit at the timer expiration (optional) */
    void (*no_data_to_transmit)(struct sock *sk);

    /* the send timer is expired (optional) */
    void (*send_timer_expired)(struct sock *sk);

    /* the TCP has sent some segments (optional) */
    void (*segment_sent)(struct sock *sk, u32 sent);

and a timer (tp->send_timer), which uses a callback to push data down the stack. If the first of these functions (get_send_timer_exp_time) is not implemented by the current congestion control, the send timer is never set, and the sender falls back to the old, ACK-clocked behavior.
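The fallback rule can be pictured with a small user-space model. This is only a sketch: `cong_ops_sketch`, `uses_send_timer` and `fixed_exp_time` are hypothetical names introduced here, `u32` is replaced by `unsigned int`, and the real kernel structure contains many more fields than the four hooks listed above.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

struct sock;   /* opaque here; the kernel defines the real type */

/* Reduced stand-in for the congestion control operations table:
 * only the four optional hooks are modelled. */
struct cong_ops_sketch {
    unsigned long (*get_send_timer_exp_time)(struct sock *sk);
    void (*no_data_to_transmit)(struct sock *sk);
    void (*send_timer_expired)(struct sock *sk);
    void (*segment_sent)(struct sock *sk, unsigned int sent);
};

/* Timer-driven sending is selected only when the first hook is provided;
 * otherwise the sender stays ACK-clocked. */
bool uses_send_timer(const struct cong_ops_sketch *ops)
{
    assert(ops != NULL);
    return ops->get_send_timer_exp_time != NULL;
}

/* Hypothetical hook returning a fixed expiration time, for illustration. */
unsigned long fixed_exp_time(struct sock *sk)
{
    (void)sk;      /* unused in this sketch */
    return 500;    /* arbitrary value, units left unspecified */
}
```

A table with all hooks left unset models an existing congestion control, which is why modules that do not know about the send timer keep working unchanged.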

Source code for the reference version of Linux TCP Wave can be found here. This source code targets a specific kernel version (4.12). The latest stable version of Linux TCP Wave is also available for kernel 4.14 here.

    $ git clone https://github.com/kronat/linux.git
    $ cd linux
    $ git checkout tcp_wave_12
    $ [enable TCP_CONG_WAVE in the .config]
    $ [build and install the new kernel]

This procedure was used to create all the plots presented in the next section, "Expected results". The Grace package (xmgrace) was used to generate the figures.

Log in as root.

    # echo "wave" > /proc/sys/net/ipv4/tcp_congestion_control
    # journalctl -f -o short-monotonic > file.txt
    # iperf -c iperf.eenet.ee -n 100M

Optionally, use this shell script to extract the logged systemd debug messages from file.txt and plot some interesting graphs.

In this section, we report a selection of our test results for the TCP Wave implementation on Linux kernel 4.12. To obtain all the results reported below, we ran an IPERF client on an Arch Linux virtual machine with a TCP Wave-enabled kernel. The IPERF client connects to a remote public IPERF server on the Internet, "iperf.eenet.ee", through an intermediate router that is used to create a custom bottleneck on the communication channel.

In the first test, IPERF establishes a single TCP connection from the client to the server, passing through a bottleneck channel with a speed of 6 Mbit/s.

Fig1. - TCP Wave burst transmission.

The TCP Wave start-up phase envisages a burst transmission using the default parameters: BURST0 (10 segments) and TXTimer0 (500 ms). The first update of the transmission timer occurs upon reception of the first ACK train (at time 0.5 s in Fig. 1). Since there are no competing flows, TCP Wave performs a stable burst transmission at almost the maximum rate of 6 Mbit/s (the bottleneck capacity) using the TCP Wave tracking algorithm. Note that the access link capacity is much higher, corresponding to the Ethernet physical link.

During the rate tracking phase, the measured RTT always oscillates between the propagation delay and the propagation delay plus AckTrainDispersion (the time needed to receive all the ACKs associated with the same burst). As a result, TCP Wave dynamically varies its TxTime computation (as seen in Fig. 2), shaping a wave-like trend in the burst transmission: it slows the rate down by increasing the distance between bursts and accelerates by decreasing it. Through this wave effect, TCP Wave constantly seeks an equilibrium between congestion avoidance and maximization of the available bandwidth.

The theoretical line represents the throughput achieved by an uncontrolled flow of packets (e.g., UDP), which is the upper limit of the channel goodput (thus including packet headers). To clarify, a TCP flow is not expected to reach this limit, because of its congestion control mechanism, which is typically based on losses or on ACK reception. If it did, the TCP would be either extremely unfriendly or pushing the buffer to a great extent (a very large increase in RTT).
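The relation between burst size, TxTime and sending rate can be made concrete with a short calculation. The sketch below assumes 1500-byte segments (headers included) and a burst that stays at the BURST0 size of 10 segments; both are assumptions for illustration, and the function names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Average sending rate in bit/s when one burst of burst_segments segments
 * is sent every tx_time_us microseconds. */
uint64_t burst_rate_bps(uint32_t burst_segments, uint32_t segment_bytes,
                        uint64_t tx_time_us)
{
    assert(tx_time_us > 0);
    return (uint64_t)burst_segments * segment_bytes * 8u * 1000000u / tx_time_us;
}

/* TxTime in microseconds at which bursts of the given size add up to
 * rate_bps on average. */
uint64_t tx_time_for_rate_us(uint32_t burst_segments, uint32_t segment_bytes,
                             uint64_t rate_bps)
{
    assert(rate_bps > 0);
    return (uint64_t)burst_segments * segment_bytes * 8u * 1000000u / rate_bps;
}
```

Under these assumptions, `burst_rate_bps(10, 1500, 500000)` gives 240000 bit/s for the start-up timer of 500 ms, while `tx_time_for_rate_us(10, 1500, 6000000)` gives 20000 µs, that is, a burst roughly every 20 ms to fill the 6 Mbit/s bottleneck.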

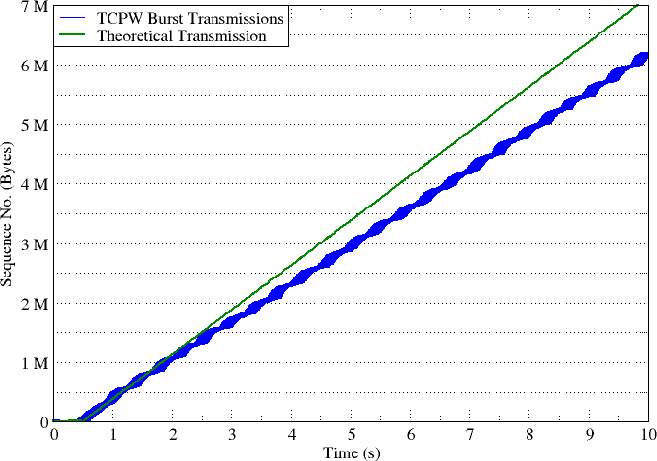

Fig2. - Variation of the TxTime based on the measured ack_train_dispersion and the experienced variation in RTT.

While in tracking mode, TCP Wave relies on continuous monitoring of both ACK train dispersion and RTT variations (DeltaRTT) in order to properly adjust transmission parameters. To this end, the TxTimer is dynamically updated on reception of each ACK train as a linear combination of:

- AckTrainDispersion, which assesses the capacity of the overall end-to-end system to process a single burst. It represents the time interval between bursts that achieves the maximum rate allowed by the bottleneck link, independently of the presence of concurrent traffic;
- DeltaRTT = avgRTT - minRTT, which is proportional to the forthcoming congestion. The higher DeltaRTT is, the heavier the current network load.

Therefore, the following equation has been used to update the TxTime:

TxTime = AckTrainDispersion + 0.5 * DeltaRTT

The 0.5 factor is justified because an increase in DeltaRTT is due to the joint effect of the target flow itself (pushing on the buffers and thereby increasing the RTT) and all possible competing flows. Hence, only one half of the rate reduction is attributed to the target flow. This action has a threefold goal: to guarantee fairness among TCP Wave flows, to be friendly towards non-TCP Wave flows, and to guarantee optimal channel utilization. Fig. 2 demonstrates the dynamic update of TxTime based on the oscillation in the measured RTT and the reception of regular ACK trains.
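The update rule can be written as a small function. This is a minimal sketch of the equation above, not the kernel code: the function name, the microsecond units and the integer arithmetic are assumptions made for the example.

```c
#include <assert.h>
#include <stdint.h>

/* TxTime = AckTrainDispersion + 0.5 * DeltaRTT,
 * with DeltaRTT = avgRTT - minRTT. All values are in microseconds;
 * the integer halving truncates towards zero. */
uint64_t update_tx_time_us(uint64_t dispersion_us, uint64_t avg_rtt_us,
                           uint64_t min_rtt_us)
{
    uint64_t delta_rtt_us;

    assert(avg_rtt_us >= min_rtt_us);
    delta_rtt_us = avg_rtt_us - min_rtt_us;
    return dispersion_us + delta_rtt_us / 2;
}
```

With a dispersion of 20 ms and no queueing (avgRTT equal to minRTT) the timer stays at 20 ms; if avgRTT rises 10 ms above minRTT, the timer grows to 25 ms, which spaces the bursts further apart and lowers the sending rate.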

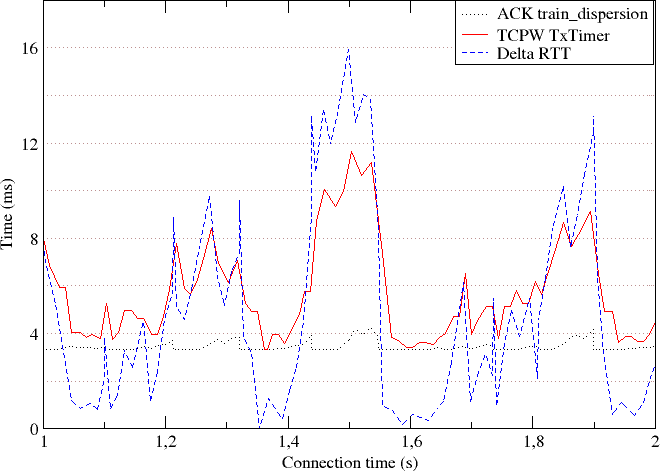

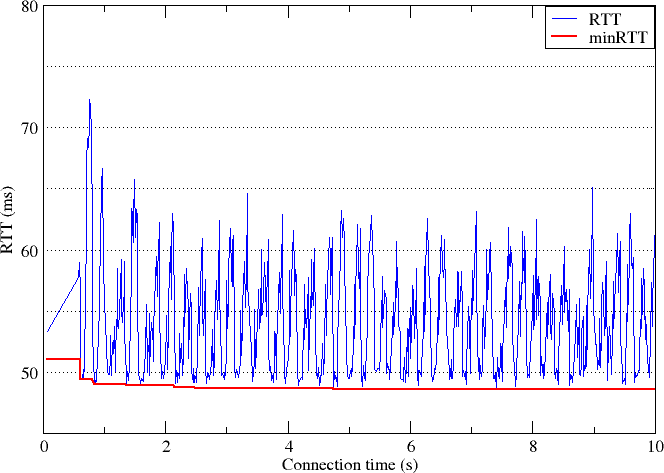

Fig3. - Measured RTT with a single TCP Wave connection.

RTT was monitored during the connection as a measure of the induced congestion. As shown in Fig. 3, RTT cyclically returns to its minimum value immediately after the periodic RTT spikes that are generated when the maximum bottleneck capacity is reached. This demonstrates how TCP Wave allows complete control of the RTT experienced at the end-points, which is kept below an estimable target value. The maximum amplitude of the peaks is due only to the cyclic utilization of the bottleneck buffer queue. Moreover, knowing the maximum number of "long" flows and the default TCP Wave parameters, it is possible to predict the maximum RTT value and tailor the system accordingly; vice versa, once the characteristics of the target communication environment are known, it is possible to find the best configuration for TCP Wave.

TCP Wave also achieves a good degree of fairness among competing flows. Fairness is managed through the tracking algorithm, which inflates TxTime using the measured DeltaRTT. To assess both TCP Wave fairness and capacity utilization, a test scenario was set up in which two long transfers over TCP Wave connections start and stop at different times. The first connection ("connection 1") transmits for 240 s, while the second connection ("connection 2") is scheduled to start at time 100 s and last 100 s.

Fig4. - Burst transmission of two TCP Wave connections.

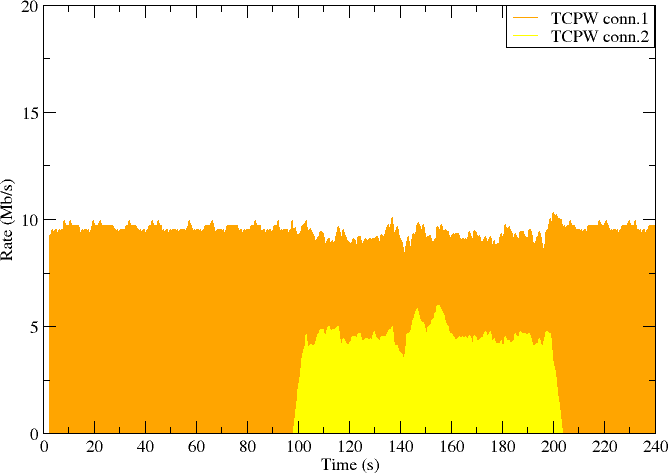

Fig5. - Fairness of the channel utilization by two TCP Wave connections.

As shown in Fig. 4 and Fig. 5, in the first 100 s a single TCP Wave flow is running and achieves the full rate of 10 Mbit/s. In the time interval 100-200 s, the burst sequence number trends of the two flows run in parallel, demonstrating similar transmission rates (around 5 Mbit/s each). This is because each TCP Wave flow senses the presence of the other and continuously modifies the TxTime in use, which produces a sort of oscillation in the burst transmission timing. At time 200 s, connection 2 ends its transmission and connection 1 quickly regains the full capacity.

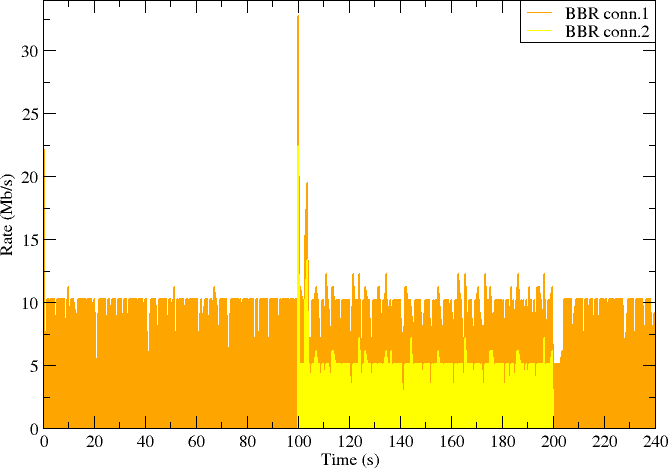

Fig6. - Fairness of the channel utilization by two BBR connections.

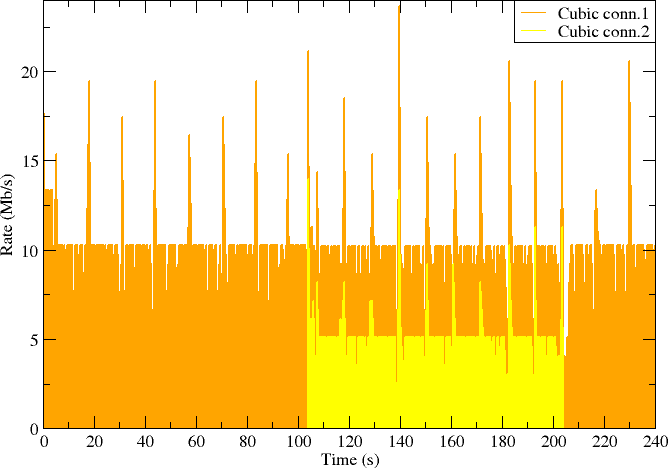

Fig7. - Fairness of the channel utilization by two TCP Cubic connections.

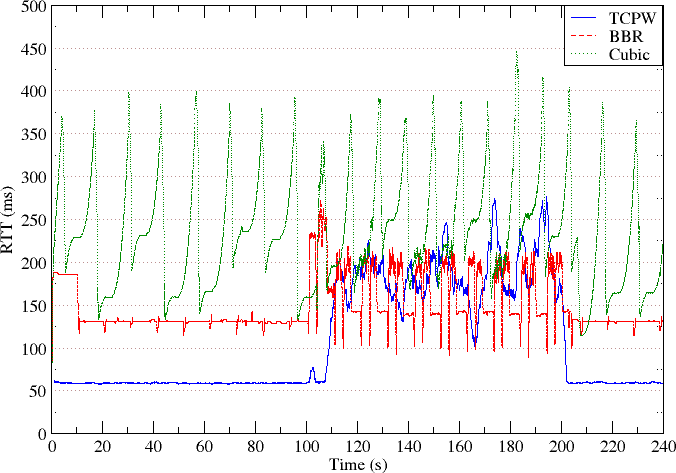

Fig8. - Comparing the effect of TCP Wave, TCP BBR, and TCP Cubic on the experienced RTT of the communication channel.

The source code and working principles of TCP Wave were designed and developed by the Satellite Multimedia Group at the University of Rome "Tor Vergata". You must keep the original copyright notes within the source code, including the reference to our research group and the contact author roseti .at. ing.uniroma2.it, in all extensions/modifications. If your work leads to publications in journals and/or conferences, please cite [1] as the reference. Full references are included in the next section. TCP Wave code for Linux is signed-off-by: Natale Patriciello natale.patriciello .at. gmail.com, tested-by: Ahmed Abdelsalam ahmed.said .at. uniroma2.it.